- How do we design a survey?

  - Choose the survey type

  - Identify survey respondents

  - Decide on the anonymity of the survey

- How do we write the content of a survey?

  - Write survey questions

  - Choose appropriate response options

- How do we structure the survey?

  - Consider the length

  - Be mindful of the order of questions

  - Clarify and guide where needed

- How do we test a survey?

- Materials & templates

- Key terminology

Design the Survey

Maximise survey responses, drive positive change, and transform engagement through effective data collection.

How do we design a survey?

The design of your survey is an integral part of a successful stakeholder feedback exchange. The survey is the tool you’ve chosen to uncover the key elements related to your goal, so it must be purpose-driven and well-rounded, and it must provide the types of data you are looking for.

Choose the survey type

Is the topic something you are exploring for the first time, or is this a continuation of work you’ve done previously? This is where you commit to either a new or a repeat survey. Once you know this, you can start working on the other considerations for the survey you wish to create.

A diagnostic survey is a general feedback survey that covers a broad range of topics and helps you see the current state of things at your school or trust. This type of “experience survey” will help you identify strengths and areas for improvement, and is well suited to strategising future growth or vision plans, or to reviewing the impact of previous work.

Some survey examples include:

- annual surveys

- KPIs

- overall experience surveys

- vision/mission checks

- alignment surveys between parents, pupils, and staff.

Purpose: to get a broad overview of how each group is doing.

Questions: a large range of questions about respondents’ overall experience, covering many areas.

Frequency: most organisations wouldn’t run this more than once a year.

A hypothesis-driven survey is suited to situations where earlier evidence, or a general sense of the current situation, has helped identify a problem for stakeholders. It will give you concrete answers on a specific topic, helping you prioritise your next steps towards improvement.

Survey topics may include:

- safeguarding

- teaching & learning

- event feedback

- workload

- feedback on upcoming changes

Purpose: to get insight into a specific area.

Questions: fewer questions, with a shared focus on one topic of inquiry.

Frequency: as needed, when something emerges from a Diagnostic survey or through other discoveries.

A third type of survey measures a small number of things frequently, so that you can monitor changes more closely, for example, a few focus topics from the general diagnostic survey. These surveys let you measure how a particular initiative is perceived and get a quick response.

Purpose: to ask something specific and get a quick response.

Questions: a focused, small set of questions with a specific topic in mind.

Frequency: at regular intervals, to monitor changes in how stakeholders feel about the topic, such as how well implemented actions are working.

Identify survey respondents

Who needs to fill out the survey to give you the answers and clarity you need? In schools and trusts, these are usually pupils, staff or parents. It’s important to know who will fill out your survey so that you use the appropriate invitation, questions, language, technology and timing to gain their insight.

Identifying your target respondents ensures that the right questions go to the people who can provide the most useful data about the topic you’re interested in.

Example survey topic: staff wellbeing

- Key stakeholders (receiving information about): staff members

- Respondents (giving information): staff members, or other possible respondents:

  - Pupils (to ask about behaviour that may be affecting staff wellbeing)

  - Parents (to ask for views on levels of trust and respect for school staff)

Within each survey, we recommend including a few demographic questions, which will help you with analysis later in the process. To deepen the analysis and your understanding of the stakeholder groups, it is also valuable to ask about protected characteristics such as gender, ethnic group, disability and sexual orientation, which we currently ask of pupils and staff.

Ask yourself: “To understand the context and be able to enact change in our school/trust, how many perspectives do we need?” There is most likely one key respondent group that is directly related to the focus of your survey goal. This group will be able to give you the most accurate insights into how they are doing with regard to your topic of interest.

Surveying multiple respondent groups broadens the range of perspectives on the topic and gives you more ways to analyse and triangulate the results. This is certainly a bigger undertaking, but it is worth weighing the impact it could have on your ability to identify key problems and decide where to go next.

Keep in mind the particular needs of each possible respondent group:

- Pupils: consider their year group and their ability to read and understand survey questions and provide responses. At Edurio, we create simpler and shorter surveys for primary pupils. Also consider whether pupils have enough experience of the topics in your survey to have something to say about them.

- Staff: staff in different roles will have different experiences and types of feedback to give you. If you’re surveying all staff, consider separate sections for more specific groups of questions, for example, asking staff in roles that regularly interact with pupils about pupil behaviour.

- Parents: take into account the demographics of your parent and carer population, and consider whether the survey needs to be offered in translation. Remember to ask only about areas that parents and carers will have had access to and experience of.

Decide on the anonymity of the survey

When respondents are asked to share their views and reflections, one of their main concerns is: “Who will see this, and in what form?” While some individuals will be comfortable answering honestly regardless of who sees their answers, others will worry about protecting their attitudes and feelings for fear of a negative impact or reaction. It is therefore crucial to guarantee the anonymity of individual survey responses.

At Edurio, we take this commitment exceptionally seriously and do our part to limit the identification of individual voices. We strongly recommend that you make your surveys truly anonymous and avoid the complex dynamics that arise with identified surveys.

Anonymity provides:

- a sense of ease for the respondent, which contributes to greater honesty and opens the survey to a greater variety of voices in your organisation;

- less biased responses, because personal relationships and power dynamics carry less weight;

- a reduced likelihood of socially desirable responses (respondents telling you what they think you want to hear);

- higher response rates, which mean your results will better represent the stakeholder body.

How do we write the content of a survey?

Designing a good survey question primarily means two things:

- The question is valuable to you

- The respondent can give a meaningful answer

#1 Generate ideas

If you are creating your own questions for the survey (rather than customising or re-using questions from a previously built survey), we suggest gathering your team together and throwing out all the ideas that come to mind around the following prompts:

- What are the different areas to explore to get a clear and well-rounded picture?

- What are the most direct questions we can ask about this topic to get clear answers?

- What questions might help us understand the context?

- What questions might give us clear guidance about the best direction/solution for a situation?

In this step, don’t worry about the language or the type of the question, just ask it! This step is about getting all the ideas out so you have a starting point to design your survey.

Warning

Stick to only asking questions that will be valuable to you – ask about things that you don’t have answers to, or that help you validate your hypotheses, and that you can use to inform your actions post-survey. The questions you ask should be geared towards helping you achieve the goals you set out during planning. It can be tempting to include “interesting to find out” questions, but remember that every question you add is an additional bit of work for your respondent, as well as yourself when you’ll be exploring the data after the survey.

#2 Focus your survey

Shift from the idea-generating stage to giving your survey structure and shape by:

- Placing similar questions together by theme;

- Removing duplicate questions so you can see the full list of questions;

- Ensuring you have a well-rounded question set for each of the themes you’re interested in;

- Naming the different themes/buckets of questions;

- Going back to your goal and discussing which themes are essential to get the information you need for this survey to be successful;

- Deciding which themes to keep and which to skip for this survey. Remember, more is not better when it comes to a survey!

Once you’ve identified your themes and have drafted questions, it’s time to dive into cleaning up the questions and deciding the best way to invite participants to answer them.

#3 Question types

#4 Key factors of question design

When writing your questions, focus on asking about the observations and feelings of your respondents. The pro tips below contrast weaker question wordings with clearer alternatives.

Pro tips

Instead of this

Does your teacher organise his / her work well?

Use this

Does your teacher start his / her lessons on time?

Instead of this

Why does the school leadership treat you with respect?

Use this

How respected by the leadership do you feel? Why?

Instead of this

How often do you give and receive feedback with other pupils at your school?

Use this

- How often do you give feedback to other pupils at your school?

- How often do you receive feedback from other pupils at your school?

Instead of this

How often are your lessons disturbed?

Use this

How often are your lessons disturbed by external noise?

Instead of this

I am not disrespected by my teacher.

Use this

How strongly do you agree with this statement: I am respected by my teacher.

Instead of this

My class does not behave the way our teacher wants.

Use this

How strongly do you agree with this statement: My class behaves the way our teacher wants.

#5 Think about the language

Be specific and intentional in the language you use and focus on the respondents’ point of view. As you’re writing the questions, ask yourself:

Is it clear? Should it be shorter/longer?

- Use clear wording without abbreviations or jargon.

- Be sure it is clear what time period you are referring to for each question (where relevant).

- Pay attention to nuance and context to give respondents a clear question they understand and don’t need to interpret.

- Make sure that every question applies to every respondent who is asked it. If some questions are meant only for a subset of respondents, some survey tools let you show questions based on pre-loaded data or on how respondents answered an earlier question.

- When writing questions for pupils, adapt your questions to be appropriate for their age, reading level, and experience.

- Make sure all respondents are fluent enough to answer questions in the language of the survey, or consider using a tool that offers your survey in other languages for respondents who may not be fluent in English.
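Conditional questions (showing a question only to the respondents it applies to) can be pictured as a simple filter. The sketch below is an illustration under stated assumptions, not the API of Edurio or any other survey tool: `Question`, `show_if` and `questions_for` are hypothetical names, and the role values are made up. It reuses the earlier idea of asking only staff who regularly interact with pupils about pupil behaviour.

```python
from dataclasses import dataclass
from typing import Callable, Optional


@dataclass
class Question:
    qid: str
    text: str
    # Hypothetical display rule: receives what is known about the respondent
    # (pre-loaded data plus earlier answers) and returns True to show the question.
    # None means the question is shown to everyone.
    show_if: Optional[Callable[[dict], bool]] = None


def questions_for(respondent: dict, questions: list[Question]) -> list[str]:
    """Return the IDs of the questions this respondent would see, in survey order."""
    return [q.qid for q in questions if q.show_if is None or q.show_if(respondent)]


PUPIL_FACING_ROLES = {"Teacher", "Teaching assistant"}  # illustrative role names

questions = [
    Question("role", "What is your role?"),
    Question(
        "behaviour",
        "How often does pupil behaviour disrupt your lessons?",
        show_if=lambda r: r.get("role") in PUPIL_FACING_ROLES,
    ),
    Question("workload", "How manageable is your workload?"),
]

print(questions_for({"role": "Teacher"}, questions))       # ['role', 'behaviour', 'workload']
print(questions_for({"role": "Office staff"}, questions))  # ['role', 'workload']
```

Keeping each rule next to its question makes it easier to check, before launch, that every respondent only sees questions that apply to them.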

All of these considerations will help you create questions that take less effort to answer, which can greatly improve the overall survey-filling experience of the respondent and, in turn, improve the quality and quantity of the data you receive.

Choose appropriate response options

The quality of your question text is important for getting key information from respondents, and the response format you pair it with is equally vital. Different response formats give you different types of information.

At Edurio, we mostly create surveys using various types of multiple choice questions with a combination of open and demographic questions (demographics are explained in detail above).

Multiple choice questions

Multiple choice questions, also known as closed-ended questions, provide a list of responses to choose from and give you quantitative information about the topic. They can ask respondents to select a single response or multiple responses from the options presented.

The single-response format is mostly used for rating scale questions, which usually ask the respondent to rate their experiences, attitudes, feelings or observations on a scale spanning from the most positive to the most negative response. It can also ask the respondent to select the one option that applies to them from a list of neutral options.

The multiple-response format typically asks the respondent to select multiple options that apply to them from a list where none of the choices presented are more or less positive than the others.

Two examples of single-response questions: asking respondents how many hours they sleep per night on average and providing a list of numbers to choose from or, as a rating scale question, asking them how rested they feel when they wake up, on a scale from “Very rested” to “Not rested at all”.

An example of a multiple-response question would be asking respondents which after-school activities they most often attend and providing a comprehensive list from which they can select all that apply. Well-made closed-ended questions enable us to turn data into reliable and useful scores and percentages.
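To show how closed-ended answers become percentages, here is a minimal Python sketch using the sleep and after-school activity examples above. The function names and data are illustrative assumptions. It also shows one practical difference between the formats: single-response shares add up to 100%, whereas multiple-response shares can exceed 100%, because one respondent can select several options.

```python
from collections import Counter


def single_response_percentages(answers: list[str]) -> dict[str, float]:
    """Percentage of respondents choosing each option; the shares add up to 100."""
    counts = Counter(answers)
    return {option: 100 * n / len(answers) for option, n in counts.items()}


def multi_response_percentages(answers: list[set[str]]) -> dict[str, float]:
    """Percentage of respondents selecting each option. Each respondent may tick
    several options (or none), so the shares can add up to more than 100."""
    counts = Counter(option for selected in answers for option in selected)
    return {option: 100 * n / len(answers) for option, n in counts.items()}


# Illustrative data: average hours of sleep per night (single response)
sleep = ["7", "8", "7", "6"]
# Illustrative data: after-school activities attended (multiple response)
activities = [{"Sport", "Music"}, {"Sport"}, {"Drama", "Sport"}, set()]

print(single_response_percentages(sleep))
# {'7': 50.0, '8': 25.0, '6': 25.0}
print(sorted(multi_response_percentages(activities).items()))
# [('Drama', 25.0), ('Music', 25.0), ('Sport', 75.0)]  (shares sum to 125)
```

Dividing by the number of respondents, not the number of ticks, keeps the multiple-response figures readable as “what share of pupils attend this activity”.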

Open-ended questions

Open-ended questions ask for a written, free-form response. These can give a wealth of qualitative information but require a lot more effort from the respondent. Open-ended questions should be used purposefully and sparingly, for example, to get an in-depth understanding of a particular topic, to give respondents a chance to raise whatever they like at the end of a survey, or as a follow-up to a particular answer option selected by the respondent. Make sure you will be able to dedicate adequate time and effort to reading and analysing the responses after the survey.

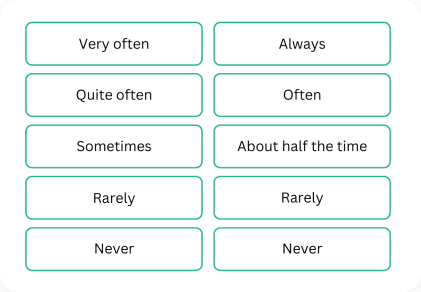

Why 5 response options is a good format

At Edurio, we mostly use 5 response options for questions. Compared with a 3-point scale, a 5-point scale provides more granular information, which allows for more in-depth analysis where necessary. We often find that a 7-point scale offers information that is too granular, requiring more time from respondents than we believe is needed. As an odd-numbered scale, the 5-point scale also provides a natural midpoint between the most positive and the least positive answer options.

We understand that you want concrete answers to your questions. But respondents are people with their own experiences and feelings, and “middle of the road” answers are part of life.

On rating scales, make sure respondents have the option to select a neutral response, for example, “Sometimes”, “Neither easy nor difficult” or “Moderately confident”. We believe that being neutral about something is a valid part of the human experience, and it’s important that this can be reflected in the data.
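A minimal sketch of why the odd-numbered scale matters at analysis time. It assumes responses are stored as option labels and that the scale is listed from most positive to most negative; both assumptions, the confidence scale and the function names are illustrative. The neutral midpoint is reported as its own group instead of being forced into the positive or negative side.

```python
from typing import Optional

# Ordered from most positive to most negative (an assumption this sketch relies on).
CONFIDENCE = [
    "Extremely confident",
    "Very confident",
    "Moderately confident",
    "Slightly confident",
    "Not at all confident",
]


def midpoint(scale: list[str]) -> Optional[str]:
    """Return the neutral middle option of an odd-numbered scale; even-numbered scales have none."""
    return scale[len(scale) // 2] if len(scale) % 2 == 1 else None


def summarise(responses: list[str], scale: list[str]) -> dict[str, float]:
    """Split responses into positive, neutral and negative shares (in %),
    keeping the neutral middle option visible rather than forcing it to one side."""
    mid = len(scale) // 2
    groups = {"positive": 0, "neutral": 0, "negative": 0}
    for answer in responses:
        position = scale.index(answer)
        if position < mid:
            groups["positive"] += 1
        elif position == mid and len(scale) % 2 == 1:
            groups["neutral"] += 1
        else:
            groups["negative"] += 1
    return {group: 100 * n / len(responses) for group, n in groups.items()}


answers = ["Very confident", "Moderately confident", "Extremely confident",
           "Not at all confident", "Very confident"]

print(midpoint(CONFIDENCE))            # Moderately confident
print(summarise(answers, CONFIDENCE))  # {'positive': 60.0, 'neutral': 20.0, 'negative': 20.0}
```

With a 4-option scale, `midpoint` returns `None` and every answer lands on one side, which is exactly the situation the neutral option is meant to avoid.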

Useful response categories

It’s essential that your response options are mutually exclusive and collectively exhaustive (also known as MECE): the options do not overlap, and together they cover all possible answers in the given context.

For example, if you’re asking pupils about the people they talk to about their troubles, create clear categories that don’t overlap (“Friends” and “Friends online”, for instance, do overlap) and that reflect the groups they are most likely to interact with. Also account for the fact that you might not have thought of everybody, and include an option such as “Someone else (please comment)” so respondents can tell you.

To make the options truly MECE, allow for the possibility that respondents don’t share their troubles with anyone, and add a “Nobody” option. Where appropriate, also give respondents options like “Other”, “Don’t know” or “Not applicable”, or the choice not to reply at all. For instance, for the question “When you feel sad or worried, who do you talk to?”:

Instead of this

Friends

Parents

Family

Teachers

Friends online

Use this

Classmates

Friends outside of school

Online friends

My parents

My siblings

Teachers

Someone else (please comment)

Nobody
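Category lists like the one above have to be checked by hand, but numeric response options, such as the hours-of-sleep example earlier, can be checked for MECE mechanically. The sketch below assumes each option is a half-open range [low, high), so “5 to 6 hours” means at least 5 and less than 6, and that all options fall within 0 to 24 hours; the function name and example options are hypothetical.

```python
def check_mece(options: list[tuple[float, float]], lower: float, upper: float) -> list[str]:
    """Check numeric response options, each a half-open range [low, high),
    for overlaps and gaps across the full range [lower, upper).
    Assumes every option lies within [lower, upper]. An empty result means MECE."""
    problems = []
    covered_to = lower  # everything below this value is already covered by an option
    for low, high in sorted(options):
        if low > covered_to:
            problems.append(f"gap between {covered_to} and {low}")
        elif low < covered_to:
            problems.append(f"overlap starting at {low}")
        covered_to = max(covered_to, high)
    if covered_to < upper:
        problems.append(f"gap between {covered_to} and {upper}")
    return problems


# Hours of sleep per night: "Less than 5", "5 to 6", ..., "9 or more" (illustrative options)
good = [(0, 5), (5, 6), (6, 7), (7, 8), (8, 9), (9, 24)]
bad = [(0, 6), (5, 7), (8, 24)]

print(check_mece(good, 0, 24))  # []
print(check_mece(bad, 0, 24))   # ['overlap starting at 5', 'gap between 7 and 8']
```

An overlap means some respondents could honestly pick two options; a gap means some respondents have no option to pick at all.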

How do we structure the survey?

Once we have our questions and answer formats sorted out, it’s time to make the survey experience as pleasant as possible for respondents. Respondent burden is the effort it takes respondents to complete a survey from start to finish. Lessening this burden can improve the chances of respondents giving quality feedback and increase their satisfaction with having taken part.

Some of the elements contributing to respondent burden will be beyond your control, but being clear about the contents of the survey, why this feedback is important to you, and how you plan to use it, will help them be more committed to participating fully.

#1 Consider the length

The length of the survey can directly affect response and completion rates: the longer the survey, the less inclined respondents will be to complete it, or even to start it. In our experience, 60 questions or fewer is an optimal length for a diagnostic survey, as adults can complete it in about 15 minutes without a negative impact on the quality of the data received.

If your respondents are children, young people or people with special educational needs, take this into account: commit to a smaller number of questions and consider other methods of collecting feedback as well. In our experience, the optimal number of questions for these groups is between 20 and 55.
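The figures for diagnostic surveys imply a pace of roughly 15 seconds per question (60 questions in about 15 minutes). The sketch below turns that into a rough planning aid. The constant is an assumption derived only from those two numbers; it describes adults completing a diagnostic survey and will likely understate the time needed for open-ended questions or for the respondent groups described above. The function names are hypothetical.

```python
# Assumed pace: 60 questions in about 15 minutes, i.e. roughly 15 seconds per question.
# Derived from the adult diagnostic-survey figures only; other groups and
# open-ended questions may need more time (an assumption, not a measured value).
SECONDS_PER_QUESTION = 15 * 60 / 60


def estimated_minutes(n_questions: int, seconds_per_question: float = SECONDS_PER_QUESTION) -> float:
    """Rough completion time, in minutes, for a survey of n_questions closed questions."""
    return n_questions * seconds_per_question / 60


def max_questions(minutes: float, seconds_per_question: float = SECONDS_PER_QUESTION) -> int:
    """Largest number of questions that fits into a time budget at the given pace."""
    return int(minutes * 60 // seconds_per_question)


print(estimated_minutes(60))  # 15.0
print(estimated_minutes(40))  # 10.0
print(max_questions(10))      # 40
```

Treat the result as a sanity check on draft length rather than a promise to respondents; piloting the survey gives more reliable timings.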

As funny as it may sound, the most impactful tool at your disposal is the delete button! Every question you ask that you don’t take action on is a waste to you and your respondents. So, as much as you can, review and edit down where possible.

#2 Be mindful of the order of questions

Much can be achieved by putting thought into how the survey questions are ordered. This can go a long way towards a surveying experience that is logical and smooth for the respondent.

Consider which questions matter most: any key questions or topics, or ones that the rest of the survey hinges upon. Think about it this way: if you asked only one question in your survey, would you have a clear understanding of the situation and be able to take actionable next steps? In the Edurio pupil safeguarding survey, the “feelings of safety” section was the most important in terms of the research objective: do pupils feel safe, and do they know what to do if they do not? If everyone had said they felt safe, most of the other questions would have been less important.

Group together questions around the same topic. This will help save your respondents time and energy and make sure they don’t have to mentally keep switching between various topics across the survey.

General demographic questions usually work best at the start of the survey (for example, role, work experience and contract type for staff, or phase and year group for pupils).

If you wish to collect additional demographic information from your respondents, perhaps to know more about their protected characteristics like age, gender, ethnic group, disability, etc., we prefer to place these at the end of the survey to minimise the risk of an early drop off due to too many questions of a personal nature at the start, and to allow respondents to consider whether they would like to provide this information given the questions they’ve answered elsewhere in the survey. Ensure that these questions are optional and not required for the completion of your survey.

If there are some sensitive topics or questions that you need to ask, it’s best to leave them towards the end of the survey. Take this into account within different thematic sections as well – it helps to ground the respondent in the topic by starting from general questions and then moving to more in-depth, specific queries.

Ask open questions towards the end of the survey where possible, because they generally take more effort to engage with, and respondents may drop off if they have to type a lot early in the survey.

#3 Clarify and guide where needed

There are a few additional tools at your disposal that can help make the survey experience more pleasant for those participating. Please note that the ability to include these elements will depend on the survey tool you choose to work with.

In an intro text, tell respondents the purpose of the survey, how their responses will be used (including any relevant information about data protection laws), whether the survey is anonymous, how long it will take to complete, and any other administrative information that is crucial to share beforehand.

Make sure to thank your respondents at the end of the survey in an outro text to show that their efforts are appreciated.

Guiding or header texts can be a great way to move the survey along and create a sense of progression through the questions. They can also provide framing for sections where it feels necessary. For example, in our EDI survey, we added header texts to all the sections and used them to provide definitions for the concepts we were asking about (diversity, inclusion, recruitment, etc.) and to explain what the following questions would cover.

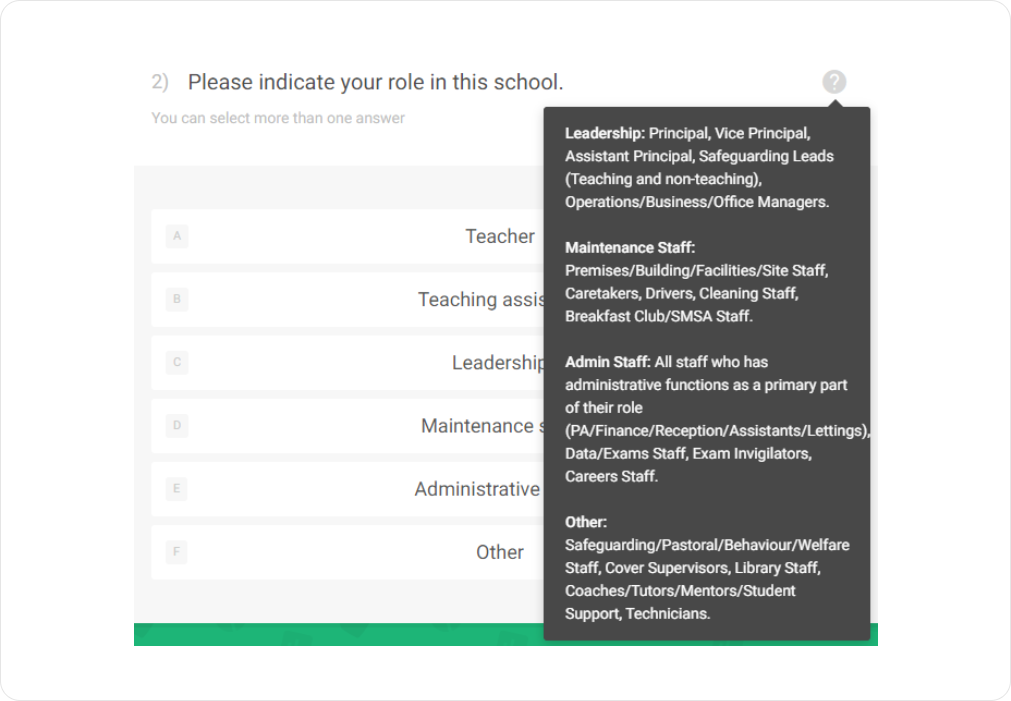

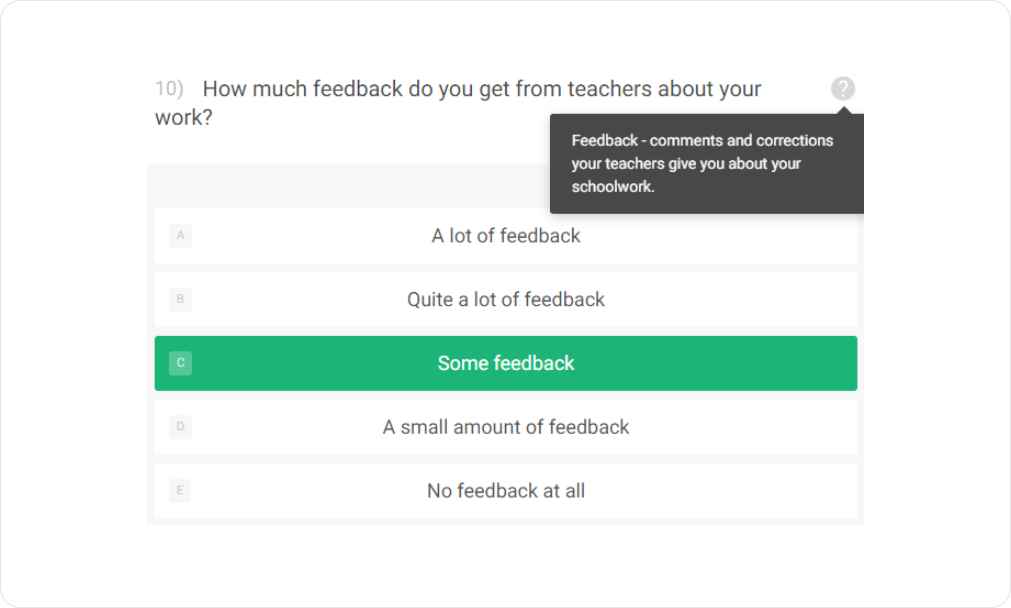

Additional explanations and definitions can also be provided through question tips (Q-tips). In practice, these are useful for giving more context on exactly what you are asking respondents. For example, add Q-tips explaining which specific roles you consider to be middle or other leadership positions in schools, as this can depend on the size of an organisation; this helps make sure respondents don’t select the wrong role by accident. Q-tips can also hold definitions of complex or specific terms that respondents may find useful to have at hand. For example, in a pupil survey, provide definitions for concepts like feedback (what you mean by it in the learning context) and bullying, a widely used word that can be misused or misunderstood.

How do we test a survey?

To make sure you’ve designed a survey that serves your organisation’s needs and achieves your goals, test it with your intended respondent group. This enables you to work out what to revise and amend before launching the survey.

First, try thinking about what you would do with the results – would you know how to interpret the responses? Then, pretend to be a respondent for a while and try to “break” your survey by imagining a wide variety of opinions that would need to be captured by these questions – can your survey accommodate all situations? Finally, pilot the survey to test it with actual respondents.

Key terminology

Change agent: Someone who takes the time to study the process and guide it to a successful outcome.

SLT/Exec Representative: Senior Leadership Team, Executive Team. Depending on the structure of your school or multi-academy or single-academy trust, the leadership team structure may differ.

Stakeholder Representative: Staff member, parent or pupil representing their group in your project.

Materials & templates

One informational summary resource accompanies this chapter of the hub. You can share and adapt the materials by providing a link to the original documents and indicating if changes were made. You may do so in any reasonable manner but not in any way that suggests that Edurio endorses you or your use.

COMING SOON

Survey design checklist

All additional resources and templates are available for free. The page is password-protected; you can get the password by completing the form above, after which an email containing the password will be delivered to your inbox.
